A GPU cluster is built by joining the GPUs of multiple nodes into a single cluster. The nodes can sit together in one data center or be distributed across devices deployed at the edge, which enables low-latency AI inference.

You can build your own GPU cluster or outsource to a cloud provider. GPUs offer far more cores than CPUs, but each core is simpler and less efficient at serial work than a CPU core, and GPU workloads often run at lower numeric precision. If you prefer to use a hosted GPU server, options include Google Colaboratory, Kaggle, Amazon SageMaker Studio Lab, Gradient by Paperspace, and a Microsoft Azure for Students account.

Understanding GPU Clusters

Joining GPUs from multiple distributed nodes lets you build a GPU cluster that delivers low-latency AI inference. Depending on your process and hardware constraints, you can build the cluster yourself or outsource it to a cloud provider.

What Is A GPU Cluster?

A GPU cluster is a network of interconnected graphics processing units (GPUs) that work together to perform high-performance computing tasks. These clusters are designed to handle data-intensive workloads, such as artificial intelligence (AI) and machine learning algorithms, that require massively parallel processing.

Why Build A GPU Cluster?

Building a GPU cluster offers several advantages over using a single GPU. The main reason is the enhanced computational power that comes with combining multiple GPUs. By harnessing the processing power of multiple GPUs in parallel, a GPU cluster can significantly accelerate the execution time of complex computations.

Benefits Of Using A GPU Cluster For AI And Data-Intensive Workloads

1. Increased processing speed: GPU clusters can handle vast amounts of data and perform complex calculations simultaneously, resulting in faster processing times.
2. Scalability: GPU clusters are highly scalable, allowing you to add or remove GPUs as needed. This flexibility enables you to adapt to changing computational demands.
3. Cost-efficiency: Despite the initial investment in building a GPU cluster, it can be more cost-effective in the long run. With improved processing speed, you can accomplish tasks faster, reducing the overall time and cost required.
4. Parallel processing capabilities: GPUs excel at parallel processing, making them ideal for AI and data-intensive workloads. By distributing the workload across multiple GPUs, a GPU cluster can handle large datasets and complex models more efficiently.
5. Lower latency: GPU clusters can be distributed, with GPUs spread across devices deployed at the edge. This arrangement enables AI inference with very low latency, making it possible to process real-time data quickly.

In summary, building a GPU cluster offers increased processing speed, scalability, cost-efficiency, parallel processing capabilities, and lower latency. These benefits make GPU clusters a valuable tool for AI development and data-intensive workloads. By harnessing the combined power of multiple GPUs, organizations can accelerate their computational tasks and achieve faster results.

Planning Your GPU Cluster Setup

When it comes to setting up a GPU cluster for AI workloads, careful planning is essential to ensure optimal performance and efficiency. In this section, we will delve into the various aspects you need to consider when planning your GPU cluster setup.

Assessing AI Cluster Requirements

Before building your GPU cluster, it’s important to assess your specific AI cluster requirements. This involves evaluating the computational needs of your AI workloads and determining the number of GPUs necessary to achieve the desired performance.

Factors to consider include the complexity of your AI models, the size of your datasets, and the expected workload concurrency. By conducting a thorough assessment, you can ensure that your GPU cluster provides enough computational power to handle the workload efficiently.
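As a rough illustration of this kind of assessment, the sketch below estimates how many GPUs are needed just to hold a model's weights in memory. The function name and every default (2 bytes per parameter for 16-bit weights, a 20% overhead allowance, 80 GB of memory per GPU) are assumptions chosen for the example, not recommendations, and the estimate ignores dataset size and workload concurrency.

```python
import math


def estimate_gpu_count(model_params_billion, bytes_per_param=2,
                       overhead_factor=1.2, gpu_memory_gb=80):
    """Rough GPU count needed to hold model weights (all defaults are assumptions)."""
    # 1 billion parameters at N bytes each is roughly N gigabytes of weights.
    weights_gb = model_params_billion * bytes_per_param
    # Leave extra room for activations, caches, and framework overhead.
    required_gb = weights_gb * overhead_factor
    return math.ceil(required_gb / gpu_memory_gb)


print(estimate_gpu_count(70))  # 70B parameters in 16-bit: prints 3
```

Treat the result as a lower bound: serving many requests at once or training the model would need considerably more memory than the weights alone.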

Determining Storage Capacity Needs

Another crucial aspect of planning your GPU cluster setup is determining the storage capacity needs. AI workloads often require large amounts of data to be stored and processed. Thus, it is essential to consider the storage requirements for your dataset, intermediate results, and model checkpoints.

To determine the storage capacity needs, you should consider the size of your datasets, anticipated growth, and the required performance characteristics. It’s essential to choose storage solutions that provide fast access and sufficient capacity to avoid storage bottlenecks.
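The sizing logic above can be sketched as a small calculation. This is only an illustration: the function name, the compound growth model, and the 25% allowance for intermediate results are assumptions, and the example figures are made up.

```python
def estimate_storage_tb(dataset_tb, annual_growth_rate, years,
                        checkpoint_gb, checkpoints_kept, scratch_fraction=0.25):
    """Estimate total storage in TB from dataset growth, checkpoints, and scratch space."""
    # Project the dataset forward with simple compound growth.
    projected_dataset_tb = dataset_tb * (1 + annual_growth_rate) ** years
    # Model checkpoints, converted from GB to TB.
    checkpoints_tb = checkpoint_gb * checkpoints_kept / 1000
    # Intermediate results, assumed to be a fixed fraction of the dataset.
    scratch_tb = projected_dataset_tb * scratch_fraction
    return projected_dataset_tb + checkpoints_tb + scratch_tb


# Hypothetical inputs: 10 TB today, 50% growth per year, a 2-year horizon,
# and 20 retained checkpoints of 100 GB each.
print(estimate_storage_tb(10, 0.5, 2, 100, 20))
```

Capacity is only half of the picture. The performance characteristics mentioned above (throughput and access latency) still have to be checked against the storage product you choose.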

Choosing The Right Networking Options

The networking infrastructure plays a vital role in the performance and scalability of your GPU cluster. When choosing the right networking options, consider factors such as latency, bandwidth, and network topology.

Low-latency networking is crucial for AI workloads, as it minimizes communication delays between GPUs and facilitates faster data exchange. Additionally, high-bandwidth networks are essential for handling the massive amounts of data involved in AI processing.

Furthermore, understanding the network topology and its impact on performance is essential. Topologies such as a non-blocking fat-tree or a torus can provide high bisection bandwidth and minimize communication overhead, leading to improved GPU cluster performance.
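To get a feel for how much link bandwidth matters, the sketch below estimates the time for a ring all-reduce, one common pattern for combining data across GPUs. The article does not prescribe this pattern; it is used here only as an example. In a ring all-reduce each GPU sends and receives about 2(N-1)/N times the payload, and the estimate ignores per-message latency, so real timings will be higher.

```python
def ring_allreduce_seconds(payload_gb, num_gpus, link_gbps):
    """Bandwidth-only time estimate for a ring all-reduce (latency ignored)."""
    if num_gpus < 2:
        return 0.0  # nothing to exchange with a single GPU
    link_gb_per_s = link_gbps / 8  # link speeds are quoted in gigabits per second
    # Each GPU transfers 2 * (N - 1) / N times the payload over its link.
    traffic_gb = 2 * (num_gpus - 1) / num_gpus * payload_gb
    return traffic_gb / link_gb_per_s


# Hypothetical comparison: 1 GB of data across 8 GPUs on two link speeds.
for gbps in (100, 400):
    print(gbps, "Gb/s:", ring_allreduce_seconds(1, 8, gbps), "seconds")
```

Because the exchange repeats many times during a distributed job, a fourfold difference in link speed at this step can translate into a noticeable difference in overall run time.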

Understanding Node Topology And Its Impact On Performance

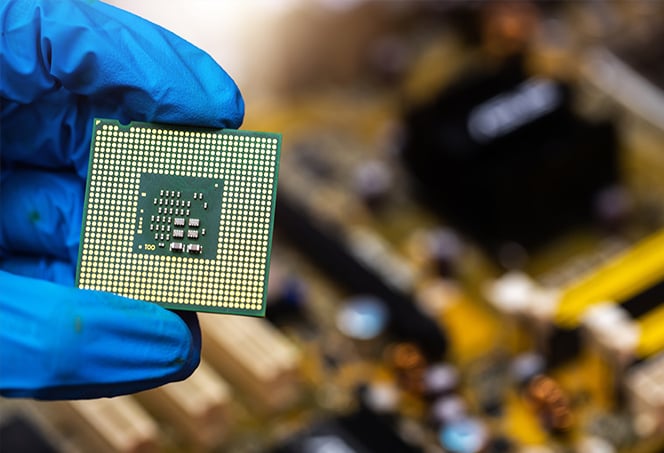

Node topology refers to how the GPUs within a node are physically arranged and connected. Understanding it is crucial for optimizing the performance of your GPU cluster.

The GPU interconnect technology, such as NVIDIA NVLink or PCIe, determines the communication bandwidth between GPUs. Choosing a node with a high-bandwidth interconnect can significantly improve the performance of GPU-to-GPU communication.

Additionally, the placement of GPUs on the node can also affect performance. Whether they are connected directly or through a switch can impact latencies and communication efficiency. Considering these factors when designing the node topology can help maximize the performance of your GPU cluster.
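For PCIe, the theoretical bandwidth can be worked out from the per-lane transfer rate. The sketch below does this for PCIe generations 3 to 5, which use 128b/130b encoding. The function name is made up for the example, the figures are per direction and exclude protocol overhead, and NVLink is not modelled because its bandwidth depends on the GPU generation.

```python
# Per-lane transfer rates in gigatransfers per second for PCIe generations 3 to 5.
PCIE_GT_PER_S = {3: 8.0, 4: 16.0, 5: 32.0}


def pcie_bandwidth_gb_per_s(generation, lanes=16):
    """Theoretical one-direction PCIe bandwidth in GB/s, before protocol overhead."""
    encoding_efficiency = 128 / 130  # 128b/130b line encoding
    per_lane_gb_per_s = PCIE_GT_PER_S[generation] * encoding_efficiency / 8
    return per_lane_gb_per_s * lanes


for gen in (3, 4, 5):
    print(f"PCIe {gen}.0 x16: {pcie_bandwidth_gb_per_s(gen):.1f} GB/s")
```

Comparing these numbers with the interconnect figures in a GPU's datasheet gives a quick sense of how much a high-bandwidth GPU-to-GPU link could help.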

Designing The Rack And Hardware Configuration

Designing the rack and hardware configuration is a crucial step when building a GPU cluster. This involves carefully considering various factors such as rack TDP, rack elevation design, PCIe device selection, and optimizing airflow and cooling. Let’s delve into each of these aspects.

What Is Rack TDP And Why Is It Important?

Rack TDP, or thermal design power, refers to the maximum power a rack is designed to draw and dissipate as heat. It is an essential consideration because exceeding the rack's power capacity can lead to system instability and potential hardware failures. Higher power consumption also increases cooling requirements and energy costs. When selecting GPUs and other hardware components for your cluster, make sure that the cumulative TDP of all devices does not exceed the rack's specified power limit.
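The check described above is simple arithmetic, sketched here. The device names, wattages, rack limit, and the 10% safety headroom are all hypothetical values for illustration; use the figures from your own hardware datasheets and facility.

```python
def rack_power_check(devices, rack_limit_watts, headroom=0.1):
    """Sum device power and compare it with the rack limit minus a safety margin.

    devices maps a device name to a (watts_each, count) tuple.
    """
    total_watts = sum(watts * count for watts, count in devices.values())
    usable_watts = rack_limit_watts * (1 - headroom)
    return total_watts, total_watts <= usable_watts


# Hypothetical rack: four GPU servers and two network switches.
rack = {"gpu_server": (6500, 4), "switch": (500, 2)}
total, fits = rack_power_check(rack, rack_limit_watts=32000)
print(total, "W total, fits within budget:", fits)
```

Running the same check whenever you plan to add a node makes it easy to see whether the rack still has power headroom.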

Rack Elevation Design Considerations

When designing the rack elevation, it’s essential to consider factors such as size, weight, and thermal management of the hardware components. A well-planned rack elevation design allows for efficient space utilization, easy accessibility for maintenance and upgrades, and proper airflow for cooling. It is recommended to follow industry-standard rack spacing guidelines and incorporate features like cable management systems and proper ventilation to ensure the longevity and performance of the GPU cluster.

Tradeoffs To Consider When Selecting PCIe Devices

When selecting PCIe devices for your GPU cluster, there are various tradeoffs to consider. These include factors such as bandwidth requirements, cost, form factor compatibility, and future scalability. Choosing the right PCIe devices, such as GPUs, network adapters, and storage controllers, is crucial to achieve optimal performance and meet the specific requirements of your workload. It’s essential to evaluate the tradeoffs carefully and select the devices that best align with your cluster’s goals and budget.

Optimizing Airflow And Cooling For The Cluster

Proper airflow and cooling are critical for maintaining the stability and performance of a GPU cluster. Overheating can lead to thermal throttling, reduced lifespan of components, and even system failure. To optimize airflow and cooling, consider factors such as rack layout, arrangement of hardware components, strategic placement of fans or cooling systems, and proper cable management. Ensuring an efficient cooling system allows the GPUs and other hardware to operate at optimal temperatures, maximizing performance and minimizing the risk of overheating.

Setting Up And Configuring Your GPU Cluster

This section covers how to set up and configure your GPU cluster for efficient, low-latency AI inference: installing a module system and Conda, loading the environment, choosing cluster storage, and moving data to and from the cluster.

Module System And Conda Installation

Before setting up and configuring your GPU cluster, it is crucial to ensure that you have the necessary software modules and tools in place. One popular approach is to use a module system like Environment Modules to manage software versions, dependencies, and environments. Additionally, Conda, a package manager and environment management system, can be used to install and manage software packages specific to your GPU cluster setup.

With the module system and Conda installed, you can easily switch between different software configurations and environments, allowing for seamless management of your GPU cluster.

Loading The Environment For Cluster Usage

After setting up the module system and installing Conda, the next step in configuring your GPU cluster is loading the appropriate environment for cluster usage. This allows you to access the necessary libraries and software tools required for running GPU-accelerated applications.

To load the environment for cluster usage, you can simply use the respective commands provided by the module system and Conda. These commands ensure that the required software packages and dependencies are loaded into the environment for seamless execution of GPU tasks on your cluster.
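On many clusters the commands in question are `module load` followed by a module name and `conda activate` followed by an environment name, but module and environment names are site-specific, so check what your cluster provides. Once the environment is loaded, a short script like the sketch below can confirm that the expected tools are visible. The function name and the list of tools are assumptions for the example.

```python
import os
import shutil


def check_cluster_environment(tools=("nvidia-smi", "nvcc", "conda")):
    """Report where each expected tool was found on PATH (None if missing)."""
    report = {tool: shutil.which(tool) for tool in tools}
    # Conda sets CONDA_DEFAULT_ENV to the active environment name, if any.
    report["CONDA_DEFAULT_ENV"] = os.environ.get("CONDA_DEFAULT_ENV")
    return report


for name, value in check_cluster_environment().items():
    print(f"{name}: {value if value else 'not found'}")
```

A "not found" entry usually means the corresponding module was not loaded or the Conda environment is not active in the current shell.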

Exploring Cluster Storage Options

When setting up your GPU cluster, it is crucial to explore and select the right storage options to meet your needs. Cluster storage options typically include local storage, network-attached storage (NAS), and distributed file systems.

Local storage refers to the storage capacity available on each individual node in the cluster. It can be used for temporary storage or caching of data that is frequently accessed by GPU-accelerated applications. Network-attached storage, on the other hand, provides shared storage accessible to all nodes in the cluster, enabling easy data sharing and collaboration among cluster users. Distributed file systems, such as Lustre or GlusterFS, offer high-performance storage solutions for large-scale GPU clusters, ensuring efficient data access and parallel processing across multiple nodes.

Uploading And Downloading Data To/From The Cluster

Once your GPU cluster is set up and the environment is loaded, you need to ensure smooth data transfer to and from the cluster. To upload data to the cluster, you can use tools like rsync, which transfers files between your local machine and the cluster, typically over an SSH connection.

Rsync provides efficient and reliable file transfer, ensuring that your data is quickly and accurately uploaded to the cluster for processing. Similarly, when it comes to downloading data from the cluster, rsync can be used to retrieve the results of your GPU-accelerated computations.

Because rsync verifies each transferred file with a checksum and skips files that are already up to date when a transfer is rerun, it helps you maintain data integrity and reduces the risk of data loss or corruption in transit. This lets you focus on your AI and machine learning tasks.
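A transfer like this can be scripted. The sketch below only builds the rsync command line, so you can inspect it before running anything. The host name and paths are placeholders, and the helper name is made up for the example. The flags used are standard rsync options: `-a` (archive mode), `-v` (verbose), `-z` (compress in transit), `--partial` (keep partially transferred files), and `--dry-run` (show what would be copied without copying).

```python
import shlex


def build_rsync_command(source, destination, dry_run=False):
    """Build an rsync argument list for copying data to or from a cluster."""
    cmd = ["rsync", "-avz", "--partial"]
    if dry_run:
        cmd.append("--dry-run")  # preview the transfer without copying anything
    cmd += [source, destination]
    return cmd


# Placeholder host and paths: replace them with your own cluster login and directories.
upload = build_rsync_command("./dataset/", "user@cluster.example.org:/scratch/project/dataset/",
                             dry_run=True)
print(shlex.join(upload))
# To execute for real, pass the list to subprocess.run(upload, check=True).
```

Swapping the source and destination arguments turns the same helper into a download of results from the cluster.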

Managing And Running Your GPU Cluster

Once you have successfully built your GPU cluster, it is time to focus on managing and running it efficiently. Effective management is crucial to maximize the cluster's performance and make the most of its capabilities. In this section, we will discuss preparing the cluster for running AI workloads, running parallel vs sequential processes, utilizing the power of GPUs in the cluster, and benchmarking and optimizing cluster performance.

Preparing The Cluster For Running AI Workloads

Before running AI workloads on your GPU cluster, it is important to ensure that the cluster is properly prepared. This includes installing the software packages, frameworks, and libraries that your AI workloads require. It also means setting up the appropriate environment variables and configurations so that the cluster is ready to handle the workload efficiently.

Running Parallel Vs Sequential Processes

When it comes to running processes on your GPU cluster, one important decision to make is whether to run them in parallel or sequentially. Running processes in parallel allows for faster execution as multiple tasks are processed simultaneously. This is especially beneficial for AI workloads that involve complex computations. On the other hand, running processes sequentially may be more suitable for certain tasks that require a specific order of execution.
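The difference can be seen in a small, self-contained sketch. Here `time.sleep` stands in for a task that mostly waits (for example, on a GPU or on I/O), and Python's standard `ThreadPoolExecutor` runs several such tasks at once. The task itself and the worker count are made up for the illustration.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def simulated_task(task_id, seconds=0.05):
    """Stand-in for a unit of work; sleeps, then returns a result."""
    time.sleep(seconds)
    return task_id * task_id


def run_sequential(task_ids):
    # One task after another, in order.
    return [simulated_task(t) for t in task_ids]


def run_parallel(task_ids, workers=4):
    # Several tasks at once; map() still returns results in input order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(simulated_task, task_ids))


if __name__ == "__main__":
    for runner in (run_sequential, run_parallel):
        start = time.perf_counter()
        results = runner(range(8))
        print(runner.__name__, results, f"{time.perf_counter() - start:.2f}s")
```

Both versions return the same results in the same order. Only the elapsed time differs, which is why parallel execution is attractive whenever the tasks do not depend on each other.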

Utilizing The Power Of GPUs In The Cluster

One of the key benefits of a GPU cluster is its ability to harness the immense processing power of GPUs. GPUs excel at handling parallel computations, making them ideal for AI workloads. To fully utilize the power of GPUs in the cluster, it is important to optimize the workload and ensure that it is properly distributed across the GPUs. This can be achieved by using parallel programming techniques and frameworks such as CUDA or OpenCL.
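Distributing a workload evenly is the core idea behind data parallelism. The sketch below splits a batch of items into one contiguous shard per GPU, with shard sizes differing by at most one. It is plain Python for illustration only: the function name is made up, and in practice a GPU framework would normally handle this step for you.

```python
def shard_batch(items, num_gpus):
    """Split items into num_gpus contiguous shards whose sizes differ by at most one."""
    base, extra = divmod(len(items), num_gpus)
    shards, start = [], 0
    for gpu_index in range(num_gpus):
        # The first `extra` shards each take one additional item.
        size = base + (1 if gpu_index < extra else 0)
        shards.append(items[start:start + size])
        start += size
    return shards


for gpu_index, shard in enumerate(shard_batch(list(range(10)), 4)):
    print(f"GPU {gpu_index}: {shard}")
```

Balanced shards matter because the whole step finishes only when the slowest GPU is done, so one overloaded GPU holds back the rest.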

Benchmarking And Optimizing Cluster Performance

Optimizing the performance of your GPU cluster is essential for achieving the best possible results. Benchmarking is a crucial step in this process as it allows you to measure the performance of your cluster and identify any bottlenecks or areas for improvement. By analyzing the benchmark results, you can optimize various aspects of the cluster such as hardware configurations, software settings, and workload distribution to maximize its performance.
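A basic timing harness is often enough to start benchmarking. The sketch below runs a function a few times to warm up, then reports the minimum, median, and maximum wall-clock time. The function name, warmup count, and repeat count are arbitrary choices for the example. Note that GPU work is often launched asynchronously, so the function being timed should wait for the GPU to finish before it returns, otherwise the measurement will be too optimistic.

```python
import statistics
import time


def benchmark(fn, warmup=2, repeats=5):
    """Time fn() over several runs after a few untimed warmup calls."""
    for _ in range(warmup):
        fn()  # warmup runs absorb one-off costs such as caching and lazy initialization
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        timings.append(time.perf_counter() - start)
    return {
        "min_s": min(timings),
        "median_s": statistics.median(timings),
        "max_s": max(timings),
    }


# Placeholder CPU workload; substitute a call into your own job.
print(benchmark(lambda: sum(i * i for i in range(100_000))))
```

Rerunning the same harness after each hardware, software, or workload distribution change gives you comparable numbers for judging whether the change helped.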

In conclusion, effectively managing and running your GPU cluster involves preparing the cluster for running AI workloads, making decisions about running processes in parallel or sequentially, utilizing the power of GPUs, and benchmarking and optimizing cluster performance. By following these steps, you can ensure that your GPU cluster is performing at its best and delivering the desired results for your AI workloads.

Credit: gcloud.devoteam.com

Frequently Asked Questions On How To Build A GPU Cluster

Can You Cluster GPUs?

Yes, you can cluster GPUs to create a distributed GPU cluster that allows for low-latency AI inference. GPU clusters can be spread across devices at the edge instead of being centralized in a data center. Joining GPUs from multiple nodes into one cluster enables efficient AI processing.

What Is The Difference Between A CPU And A GPU Cluster?

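A CPU cluster is ideal for serial instruction processing, where a few fast cores execute steps one after another. A GPU cluster has far more cores, but each core is simpler and less efficient on its own, and GPU workloads often run at lower numeric precision. As a result, GPUs are slower than CPUs for algorithms that require serial execution and faster for work that can be split across many cores.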

How To Get A Free GPU Server?

To get a free GPU server, you can try platforms like Google Colaboratory, Kaggle, Amazon SageMaker Studio Lab, Gradient by Paperspace, or a Microsoft Azure for Students account. These platforms offer access to GPU servers for free or at a reduced cost.

How To Use A GPU Server?

To use a GPU server, you can follow these steps:

1. Choose a GPU server provider or build your own GPU cluster.
2. Install the necessary software and drivers for GPU computing.
3. Write or modify your code to utilize the GPU for computations.
4. Upload your data to the GPU server.
5. Run your code and monitor the performance of your GPU.

Remember to ensure that your code is optimized for GPU computing to achieve the best results.

Conclusion

Building a GPU cluster is an effective solution for high-performance computing needs. By distributing GPU nodes across devices deployed at the edge, AI inference can be run with minimal latency. CPU and GPU clusters differ mainly in how many cores they have and in the kind of work those cores handle well.

While CPUs are suited for serial instruction processing, GPUs are better for parallel processing. To build a GPU cluster, you can either do it yourself or opt for a cloud provider. Regardless of the route you choose, having a GPU cluster will enhance your computing capabilities and accelerate your research or AI applications.
